How to Rank in Google AI Overviews: A Blogger's Survival Guide for 2026

Key Takeaways for Ranking in AI Overviews

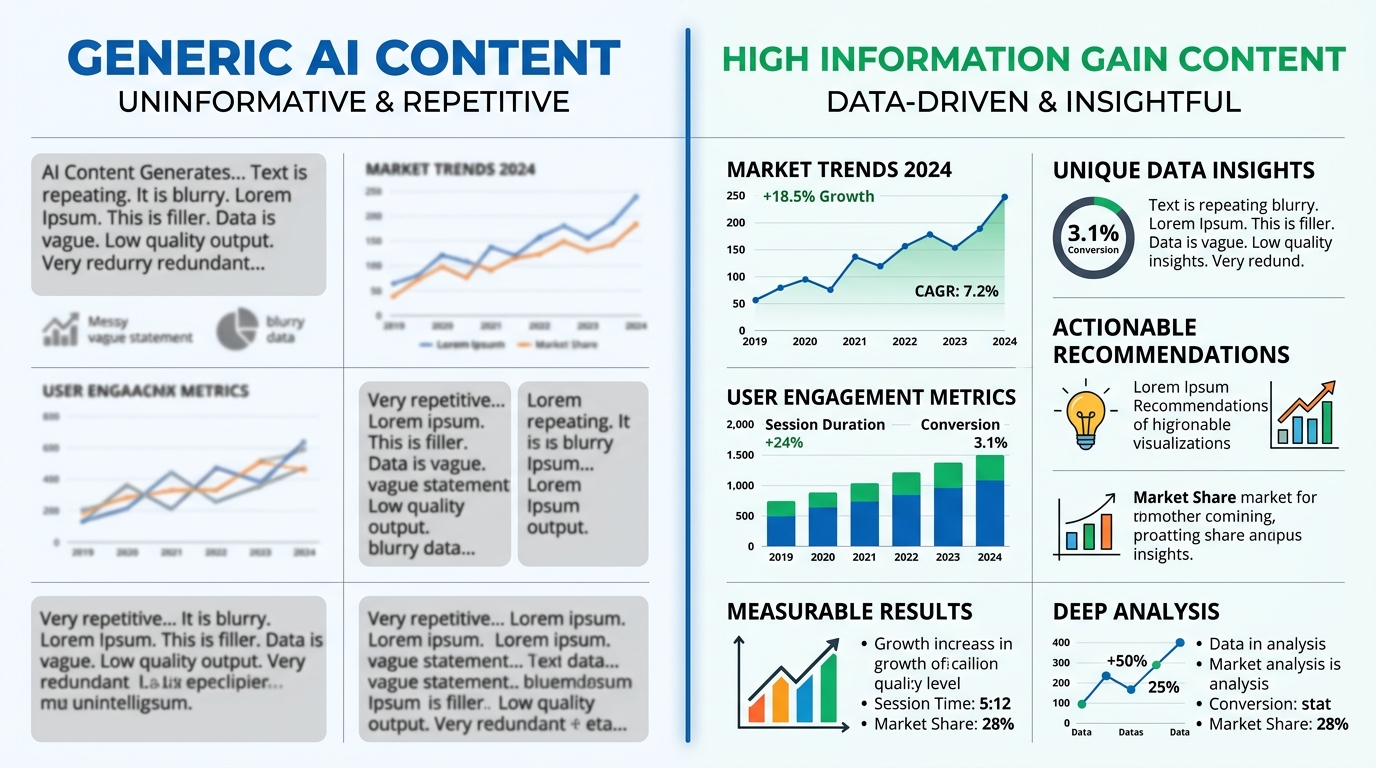

To rank in Google AI Overviews in 2026, bloggers must prioritize Information Gain, implement advanced Schema markup, and refresh content frequently to keep data accurate. Google’s generative engine favors unique insights that aren't already present in its training data or the top 10 search results. Technical teams supply these insights by injecting proprietary data, first-hand testing results, or unique expert perspectives into every H2 and H3 block.

A niche travel blog recently documented a 40% drop in organic clicks following an AI Overview rollout. Recovery started only after the team added "Information Gain" blocks (specific sections containing non-obvious travel tips and local cost data), which the AI began citing as primary sources within the summary box. This fits a broader pattern: AI Overviews, the successor to Google's Search Generative Experience, often favor utility-focused lists over long-form narrative, and discrete, structured facts are easier for the model to extract and cross-check against its knowledge graph.

How does a content team stay relevant when a chatbot answers the user's query directly?

- Information Gain (IG): Every article needs a unique data point, original image, or perspective not found in the top 3 SERP results to avoid being filtered as redundant.

- Entity-Based Optimization: Use specific nouns and clear relationships between subjects to help Google identify your content as an authoritative "entity" within its Knowledge Graph.

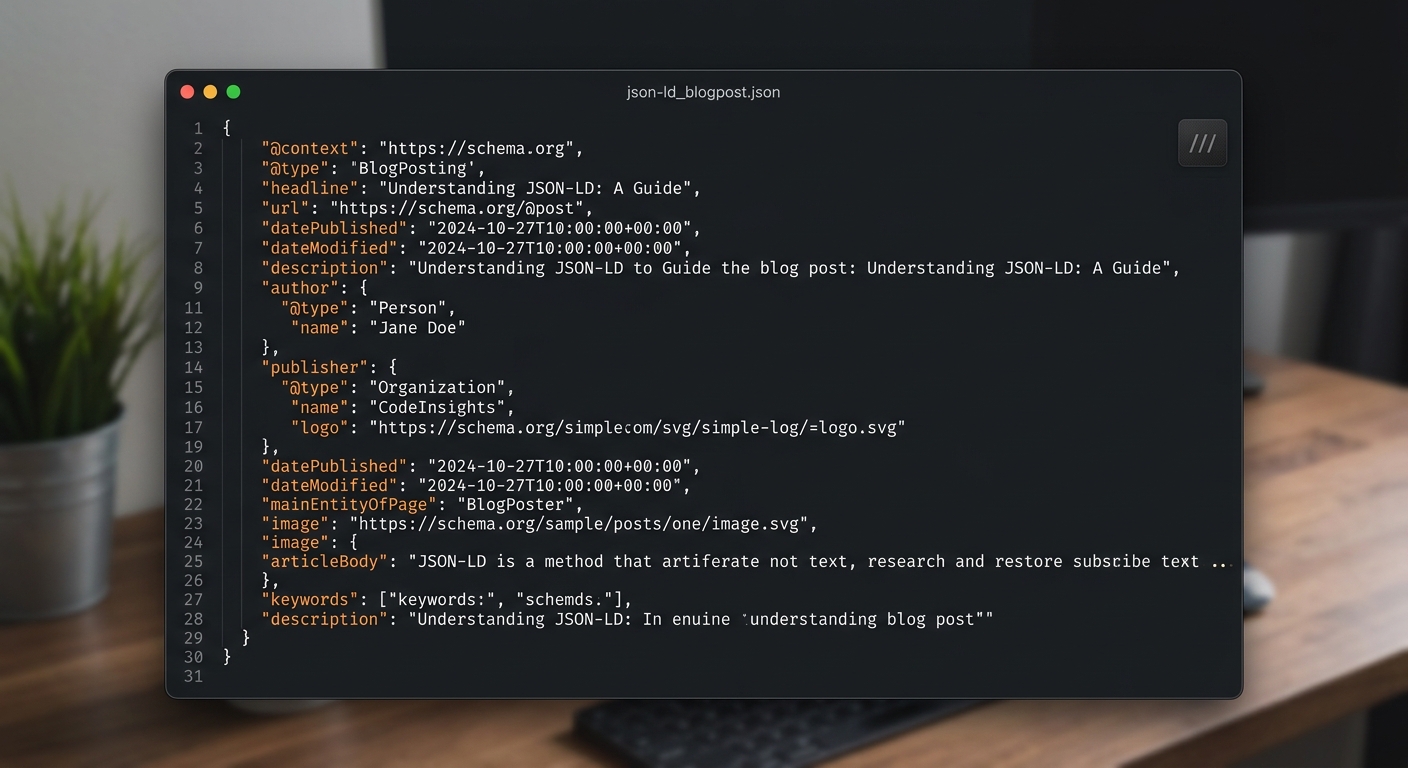

- Schema Markup: Implement JSON-LD (specifically the Article, FAQPage, and HowTo types) to act as a translator between your unstructured text and the machines that parse it.

- Content Decay Management: Establish a 90-day refresh cycle for high-traffic posts using tools like the Articfly Article Refresher to ensure the AI doesn't flag your statistics as outdated.

- Source Citation Mapping: Ensure your site name and author credentials are consistently formatted across the web to increase the probability of being listed in the AI's "carousel" of sources.

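Aim for an Information Gain score of at least 10% on every published URL, meaning at least a tenth of each page's substance should be absent from competing results.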

The Shift from SERP to Answer Engine Optimization (AEO)

Google AI Overviews are synthesized responses that aggregate data from high-authority, high-relevance sources to answer complex user queries directly on the SERP. This shift fundamentally alters the 2026 search environment, moving it away from a list of external links and toward a single narrative generated by Large Language Models (LLMs). Success requires a transition to Answer Engine Optimization (AEO). Instead of optimizing for "position one," publishers compete for "Citation Share" within the generative output: the percentage of all citations in AI-generated answers for a specific topic cluster that point to a given domain, measured over a 30-day window.
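Because Citation Share is a ratio over a rolling window, it is straightforward to compute once you log which domains each AI Overview cites. A minimal sketch, assuming you already collect one snapshot per tracked query (the `observations` structure, the `citation_share` helper, and the `.example` domains are all hypothetical):

```python
from collections import Counter
from datetime import date, timedelta

def citation_share(observations, domain, today, window_days=30):
    """Percentage of all AI Overview citations in the window that went to `domain`.

    observations: iterable of (observed_on, cited_domains) pairs, one per
    AI Overview snapshot for a query in the topic cluster.
    """
    cutoff = today - timedelta(days=window_days)
    counts = Counter()
    for observed_on, cited_domains in observations:
        if cutoff < observed_on <= today:  # trailing 30-day window only
            counts.update(cited_domains)
    total = sum(counts.values())
    return 100.0 * counts[domain] / total if total else 0.0

snapshots = [
    (date(2026, 3, 1), ["filament-notes.example", "bigretailer.example"]),
    (date(2026, 3, 10), ["filament-notes.example", "wiki.example", "bigretailer.example"]),
    (date(2026, 1, 5), ["bigretailer.example"]),  # outside the window, ignored
]
print(citation_share(snapshots, "filament-notes.example", today=date(2026, 3, 15)))  # 40.0
```

Counting every cited domain (not just yours) in the denominator is what makes this a share rather than a raw count, so the number stays comparable across months with different query volumes.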

Attribution for these answers relies on the "Citation Carousel," which displays source cards alongside the AI's generated text. An agency manager running a 50-site WordPress portfolio might therefore track Citation Share instead of traditional keyword rankings for intent-driven queries. AI Overviews often cite sources that don't appear on the first page of organic results, provided the content closely matches the query's semantic intent. This mechanism favors technical precision over broad coverage, which puts generalist sites at a disadvantage.

Specialized niche authority has become the primary filter for which sources an AI model selects. A site focused exclusively on "PostgreSQL optimization for FinTech" carries more weight in an AI response than a general tech news site with 10x the traffic. The preference likely stems from the model's need for dense, specific information that reduces the risk of hallucination. Specificity wins.

Because retrieval-augmented generation (RAG) pipelines prioritize deep topical clusters, generalist sites often struggle to maintain visibility. For example, a small blog dedicated to 3D printing filaments can outrank a major hardware retailer because its schema-marked tables provide the specific melting-point and tensile-strength data the AI needs. Teams managing production-scale WordPress instances often find that breaking broad categories into hyper-specific subdomains or content silos yields better AEO results. A 10-person ops team might spend 40% of its time refining these semantic relationships within its internal linking structure, using tools like Articfly to automate internal link mapping and schema validation. Every article must serve as a discrete data point for the LLM's knowledge graph.
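To see why a specific page can beat a bigger, more general one at the retrieval stage, here is a toy retriever that scores pages by cosine similarity between term-frequency vectors. It is a deliberate simplification: production RAG systems typically use dense embeddings, and the page texts below are invented. The ranking principle, however, is the same: the page whose content best matches the query's specifics wins.

```python
import math
import re
from collections import Counter

def vectorize(text):
    """Lowercased term-frequency vector (a crude stand-in for an embedding)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, pages, k=1):
    """Return the names of the k pages most similar to the query."""
    q = vectorize(query)
    ranked = sorted(pages, key=lambda name: cosine(q, vectorize(pages[name])), reverse=True)
    return ranked[:k]

pages = {
    "niche-filament-blog": "PETG filament melting point 230 C, tensile strength 50 MPa; "
                           "PLA filament melting point 180 C, tensile strength 60 MPa.",
    "hardware-retailer": "Shop printers, laptops, monitors, cables and 3D printing filament "
                         "with fast shipping and great deals on all hardware.",
}
print(retrieve("PETG filament melting point", pages))
```

The retailer page mentions "filament" once among many unrelated terms, so its similarity to the query is diluted; the niche page repeats exactly the attributes the query asks about.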

Maximizing Information Gain to Secure Citations

Information Gain is the measure of new, non-redundant information a webpage provides compared to other documents in the same cluster. LLMs used by Google and Perplexity prioritize sources that contribute unique data points rather than those that merely rephrase existing search results. If three articles cite the same 2023 industry statistic, a fourth article introducing a fresh survey of 500 CTOs holds more value. Favoring high-gain sources keeps search engines from presenting repetitive answers and rewards whoever publishes new information first.
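There is no public formula for Information Gain, so the following is only a rough proxy built on our own assumptions: treat each page as a set of normalized "claims" (here, simply lowercased sentences; a real pipeline would more likely extract facts or entities) and measure the share of your claims that no competing page already makes. The `claims` and `information_gain` helpers and the sample texts are hypothetical, and the 10% check mirrors the target from the takeaways rather than any Google metric.

```python
import re

def claims(text):
    """Split text into normalized sentence-level 'claims' (a crude assumption)."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return {re.sub(r"\s+", " ", s.lower().rstrip(".!?")) for s in sentences if s}

def information_gain(page, competitors):
    """Fraction of the page's claims absent from every competitor page (0.0 to 1.0)."""
    mine = claims(page)
    seen = set().union(*(claims(c) for c in competitors))
    return len(mine - seen) / len(mine) if mine else 0.0

# Three competitors repeat the same statistic; our page adds one new data point.
competitors = [
    "The 2023 industry statistic applies. Audit your stack.",
    "The 2023 industry statistic applies. Use more automation.",
    "The 2023 industry statistic applies. Budgets are tight.",
]
page = ("The 2023 industry statistic applies. Budgets are tight. "
        "Our fresh survey of 500 CTOs adds a new data point.")
score = information_gain(page, competitors)
print(f"{score:.0%}", "meets 10% target" if score >= 0.10 else "below target")
```

Exact sentence matching undercounts paraphrased redundancy, so this proxy will overstate gain for lightly reworded content; treat it as a floor-check, not a verdict.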

To earn a citation in an AI Overview, a document has to add something that changes what the model would otherwise say. When a crawler encounters a proprietary dataset, it is likely to treat that content as a high-gain source. Teams that publish, for instance, 30 days of performance data from an n8n workflow create the technical "hooks" that AI engines need to justify a link. Generic content lacks these hooks and tends to be excluded from the final answer summary.

Original survey data is the ultimate Information Gain asset. When a blog publishes results from a poll of 240 WordPress developers about plugin bloat, it provides a dataset that does not exist elsewhere on the web, which makes the page the natural primary source for that metric. This moves beyond simple keyword matching and into the territory of data authorship.

AI engines answering specific queries prioritize sources that fill a knowledge gap. Most AI writing tools fall short here because they scrape the top 10 Google results and synthesize a summary of what already ranks. Articfly’s Advanced Mode avoids this echo chamber by forcing the engine to integrate specific editorial perspectives rather than rewriting SERP content. Content managers who use the Brand Voice Analyzer can feed the system internal reports or 15-page technical manuals to establish a baseline.

Case studies provide the strongest signal, such as a 1,200-word post detailing how a SaaS reduced churn by 14% using a specific Postgres optimization. Perplexity offers one intuition for why: it measures how surprising a sequence of tokens is to a language model, so relevant details the model could not have predicted from its training data are precisely what it must borrow from an external source. High-performing blogs use Advanced Mode to inject these specific data points into every H3 section, and success depends on concrete artifacts such as 12-point data tables or 5-step implementation logs.
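For reference, perplexity is the exponential of the average negative log-probability a model assigns to each token: PPL = exp(-(1/N) * sum of log p(token_i | preceding tokens)). A minimal sketch, assuming you already have per-token probabilities from some language model; the probability lists below are made up purely to contrast a predictable sentence with one full of specific figures.

```python
import math

def perplexity(token_probs):
    """exp of the mean negative log-likelihood over the tokens."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(nll)

# Illustrative values only: a generic sentence the model predicts easily
# versus one containing specific, hard-to-predict figures.
generic = [0.9, 0.8, 0.85, 0.9]
specific = [0.9, 0.05, 0.1, 0.8]
print(round(perplexity(generic), 2), round(perplexity(specific), 2))
```

Higher perplexity on the specific sentence is the quantitative version of "the model could not have guessed this", which is the property original data contributes.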

Technical AEO: Schema, Entities, and Structured Data

Schema markup provides the explicit context that LLMs need to categorize content as a 'fact' or an 'answer,' which can significantly increase the likelihood of an AI citation. By defining the semantic relationships between data points, such as an author's credentials or the steps of a guide, structured data acts as a machine-readable map for Google's Knowledge Graph. LLMs frequently struggle with ambiguity in unstructured text, but a well-formed JSON-LD block reduces the guesswork by labeling specific strings as entities.
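A minimal sketch of such a block, generated in Python so that every value comes from one place. The headline, author name, job title, profile URL, and dates are placeholders; the property names (`@context`, `@type`, `headline`, `author`, `jobTitle`, `sameAs`, `datePublished`, `dateModified`) are standard Schema.org vocabulary.

```python
import json

def article_jsonld(headline, author_name, job_title, profile_url, published, modified):
    """Build an Article JSON-LD script tag that labels the author as a Person entity."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": job_title,    # the author's credential, as an explicit field
            "sameAs": [profile_url],  # ties the author to an external profile
        },
        "datePublished": published,   # ISO 8601 dates
        "dateModified": modified,
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"

snippet = article_jsonld("Tuning PostgreSQL for FinTech Workloads", "Jane Doe",
                         "Senior Database Engineer", "https://profiles.example.com/janedoe",
                         "2026-01-10", "2026-03-02")
print(snippet)
```

Serializing with `json.dumps` rather than hand-writing the string guarantees correct quoting and commas.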

Using markup such as the speakable property or the FAQPage type helps search engines identify the intent of a paragraph for voice and generative interfaces. A technical SEO lead using Articfly's 13 SEO tools to automate FAQ and How-To schema across 1,000+ legacy posts can effectively turn a static archive into a crawlable database; manual entry at that scale is impractical. Converting prose into discrete data points drives visibility in voice search and AI-generated summaries.
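At its core, that kind of automation turns existing question-and-answer pairs into FAQPage markup. A minimal sketch, assuming the pairs have already been extracted from a post (the extraction step is not shown, and `faq_jsonld` is a hypothetical helper name):

```python
import json

def faq_jsonld(pairs):
    """Turn (question, answer) pairs into a Schema.org FAQPage object."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

pairs = [
    ("Can AI-generated content rank in AI Overviews?",
     "Yes, if it provides high information gain and is properly structured."),
    ("How often should high-traffic posts be refreshed?",
     "A 90-day cycle is a common starting point."),
]
print(json.dumps(faq_jsonld(pairs), indent=2))
```

Since 2023, Google has shown FAQ rich results only for a limited set of authoritative government and health sites, so treat this markup as a machine-readability aid rather than a guaranteed SERP feature.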

Is your content actually machine-readable? Beyond basic Article schema, DataDownload markup helps AI models identify downloadable datasets, which are high-value targets for data-heavy AI queries. Articfly maps entities by linking primary keywords to established Wikidata or DBpedia entries, which anchors content to recognized nodes in the global Knowledge Graph; its standard library currently supports 1,200 entities for linking. The speakable property marks sections suited to text-to-speech playback, a signal Google Assistant uses (and Gemini may use) to identify high-quality, audio-ready answers. Articfly automates the JSON-LD generation for these nodes, ensuring the cssSelector or xpath accurately targets the most relevant H2 or H3 blocks without breaking the page layout.
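A sketch of entity anchoring plus speakable markup, assuming a WebPage whose key answers sit in elements with a hypothetical `.answer-summary` class directly after H2 or H3 headings. The page URL and the Wikidata URL are placeholders (replace `Q-PLACEHOLDER` with the real entity ID); `about`, `sameAs`, `speakable`, `SpeakableSpecification`, and `cssSelector` are Schema.org vocabulary.

```python
import json

def webpage_jsonld(url, topic_name, wikidata_url, css_selectors):
    """WebPage JSON-LD that anchors the topic to Wikidata and marks speakable sections."""
    return {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "url": url,
        "about": {
            "@type": "Thing",
            "name": topic_name,
            "sameAs": wikidata_url,  # links the local entity to a global Knowledge Graph node
        },
        "speakable": {
            "@type": "SpeakableSpecification",
            "cssSelector": css_selectors,  # elements suited to text-to-speech playback
        },
    }

page = webpage_jsonld(
    "https://blog.example.com/postgres-fintech",
    "PostgreSQL",
    "https://www.wikidata.org/wiki/Q-PLACEHOLDER",
    ["h2 + .answer-summary", "h3 + .answer-summary"],
)
print(json.dumps(page, indent=2))
```

Targeting short summary elements rather than whole sections keeps the speakable content brief, which suits audio playback.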

Engineers managing 50+ workflows often see better indexing when they use the @id keyword to connect local entities to global ones. The @id URL should point to a stable, canonical version of the entity to prevent graph fragmentation. A properly nested FAQPage schema with 3-5 high-relevance questions provides the question-and-answer structure that Google's Gemini-powered answer features can draw on. The Articfly dashboard handles the technical overhead by injecting these scripts directly into the WordPress header via its native plugin, which keeps the markup lean and valid.

Manual coding is fragile: a single missing comma in a hand-written JSON-LD script can invalidate the entire block, so the Articfly generator validates the code against the latest Schema.org standards before every publication. Anyone managing production n8n instances or complex WordPress environments knows that keeping schema consistent is harder than generating it. Articfly addresses this by syncing the schema object with the live content, so if a writer changes a figure from 20% to 25%, the JSON-LD updates automatically to reflect the new data point.
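The ideas above can be sketched in a few lines: nodes in one `@graph` reference each other by stable `@id` URLs, every figure is read from a single source dictionary so the markup cannot drift from the copy, and `json.loads` catches syntax errors such as a missing comma. This is an illustrative approach under our own assumptions (placeholder site URL, hypothetical `build_graph` and `is_valid_jsonld` helpers), not Articfly's implementation, and it checks JSON syntax only, not Schema.org conformance.

```python
import json

SITE = "https://blog.example.com"  # placeholder canonical origin

def build_graph(post):
    """Article and Organization nodes linked by stable @id URLs."""
    org_id = f"{SITE}/#organization"
    return {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "Organization", "@id": org_id, "name": post["publisher"]},
            {
                "@type": "Article",
                "@id": f"{SITE}{post['path']}#article",
                "headline": post["headline"],
                "publisher": {"@id": org_id},  # a reference, not a duplicated node
                "description": f"Key figure: {post['key_figure_pct']}%.",
            },
        ],
    }

def is_valid_jsonld(text):
    """True if the text parses as JSON; a missing comma makes this False."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False

post = {"path": "/case-study", "publisher": "Example Blog",
        "headline": "How a SaaS Reduced Churn", "key_figure_pct": 20}
post["key_figure_pct"] = 25  # edit the source once; the JSON-LD follows
generated = json.dumps(build_graph(post), indent=2)
print(generated)
print(is_valid_jsonld('{"@type": "Article" "headline": "Oops"}'))  # hand-written, missing comma
```

Generating markup from the same data that renders the page is what keeps the 20% to 25% edit consistent in both places.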

Combating Content Decay in the AI Era

Content decay is the primary reason blogs lose AI citations, because Google's AI systems tend to favor the most recent verified data points. Research into AI Overviews (AIO) suggests a "Freshness Threshold": content older than about 180 days begins to lose citation priority to newer, semantically similar pages. LLM-driven systems often treat older data as stale, which lowers the probability of a URL being selected for a summary or a direct answer card.
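The 180-day threshold is an observed pattern rather than a documented rule, but it is easy to monitor. A minimal sketch, assuming you can export each post's URL and last-modified date (the URLs and dates are placeholders, and `stale_posts` is a hypothetical helper):

```python
from datetime import date

FRESHNESS_THRESHOLD_DAYS = 180  # threshold discussed above; tune for your niche

def stale_posts(posts, today, threshold=FRESHNESS_THRESHOLD_DAYS):
    """Return (url, age_in_days) for posts older than the threshold, oldest first."""
    aged = [(url, (today - modified).days) for url, modified in posts]
    return sorted((p for p in aged if p[1] > threshold), key=lambda p: p[1], reverse=True)

posts = [
    ("https://blog.example.com/interest-rates", date(2026, 2, 20)),
    ("https://blog.example.com/postgres-tuning", date(2025, 6, 1)),
    ("https://blog.example.com/filament-guide", date(2025, 9, 1)),
]
for url, age in stale_posts(posts, today=date(2026, 3, 1)):
    print(f"{age:>4} days  {url}")
```

Sorting oldest first gives a natural refresh queue: the posts furthest past the threshold get attention before those that only just crossed it.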

A finance blog that refreshes its 'Interest Rates' post weekly with Articfly's Article Refresher to hold the #1 AI citation slot illustrates why frequency matters. When data points such as a 4.25% APY figure become outdated, the AI model's confidence in that URL drops significantly. Maintaining a top position requires continuous verification loops rather than one-off publication. Crawlers can also read the 'Last-Modified' header in the HTTP response, which Articfly updates automatically during every sync so that the change is visible to them.
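The Last-Modified header uses the HTTP-date format (an IMF-fixdate such as `Sun, 01 Mar 2026 09:30:00 GMT`, per RFC 9110). A minimal sketch of producing it from a post's modification time with Python's standard library; how the header is actually attached depends on your server or WordPress setup and is not shown, and `last_modified_header` is a hypothetical helper name.

```python
from datetime import datetime, timezone
from email.utils import format_datetime, parsedate_to_datetime

def last_modified_header(modified):
    """Format an aware datetime as an HTTP Last-Modified value (IMF-fixdate, GMT)."""
    # usegmt=True requires a UTC datetime, so normalize first.
    return format_datetime(modified.astimezone(timezone.utc), usegmt=True)

modified = datetime(2026, 3, 1, 9, 30, tzinfo=timezone.utc)
header = last_modified_header(modified)
print("Last-Modified:", header)  # Last-Modified: Sun, 01 Mar 2026 09:30:00 GMT
print(parsedate_to_datetime(header) == modified)  # True: the value round-trips
```

The header should change only when the content does; bumping it without a real edit gives crawlers a misleading freshness signal.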

Because AI search engines prioritize data that appears current and factually accurate, a six-month-old article in a technical niche can become nearly invisible to an LLM. Articfly’s Article Refresher automates this verification loop by identifying posts with declining impressions and triggering an update workflow directly inside the WordPress dashboard. It scans for updated entities and refreshes the underlying Schema markup (specifically the 'dateModified' and 'mainEntity' fields) to signal reliability to Google's crawlers. This automation is designed to prevent the roughly 20% traffic drop typically seen when a post crosses the 200-day mark without an edit.
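The trigger logic itself is simple to sketch. This assumes you export impressions per post for two consecutive periods (for example, from Search Console) and keep each post's JSON-LD as a dictionary. The 20% decline cutoff is a hypothetical parameter, and `needs_refresh` and `mark_refreshed` are illustrative names, not Articfly functions.

```python
from datetime import date

def needs_refresh(prev_impressions, curr_impressions, min_decline=0.20):
    """True when impressions fell by at least `min_decline` between periods."""
    if prev_impressions <= 0:
        return False
    return (prev_impressions - curr_impressions) / prev_impressions >= min_decline

def mark_refreshed(schema, refreshed_on):
    """Return a copy of the Article schema with dateModified set to the refresh date."""
    updated = dict(schema)
    updated["dateModified"] = refreshed_on.isoformat()
    return updated

schema = {"@type": "Article", "datePublished": "2025-08-01", "dateModified": "2025-08-01"}
if needs_refresh(prev_impressions=12_000, curr_impressions=8_400):  # a 30% decline
    # ...update statistics and sections here, then record the change:
    schema = mark_refreshed(schema, date(2026, 3, 1))
print(schema["dateModified"])
```

Update `dateModified` only after the content has actually changed; moving the date alone is not a refresh.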

Manual audits are too slow even for a 50-post library, which is why one-click refreshes matter.

Engineers running large-scale WordPress sites use these automated triggers to keep their most valuable pages in a "forever fresh" state. A 10-person agency managing 500+ articles cannot manually check every statistic or link every quarter, so the system compares existing content against current SERP data to identify missing subtopics or outdated claims. This keeps the blog a primary source for Google’s AI responses without requiring a full editorial rewrite. Teams that automate these updates see a more stable citation count than teams relying on manual quarterly reviews in the WordPress dashboard.

Building Topical Authority through Content Clusters

Topical authority is built by covering every facet of a subject, creating a dense web of information that makes your site the "definitive" source for an LLM. Large Language Models prioritize entities that demonstrate semantic completeness rather than isolated keyword hits. By saturating a niche with interconnected subtopics, a domain signals to Google’s Gemini or OpenAI’s SearchGPT that it contains the necessary context to resolve complex user intents without external validation. This strategy moves beyond simple keyword density toward entity-based relevance.

Search engines now evaluate how thoroughly a site answers the secondary and tertiary questions surrounding a core topic. A site that addresses "AI content generation" must also cover "hallucination risks," "token costs," and "human-in-the-loop workflows" to be seen as authoritative. When a site maps these relationships through internal linking and comprehensive subtopic coverage, it becomes a primary node in the LLM’s knowledge graph. This structural density is what secures placements in AI Overviews and cited snippets.

A marketing team targeting the "AI Marketing" niche shows this in action. By using Articfly’s 360-day roadmap to schedule three articles per week (roughly 154 posts over the year), the team systematically addresses 150+ distinct long-tail queries. The resulting volume creates a structural advantage through near-total semantic coverage of the niche. Schema markup such as the AboutPage type and the mentions property helps LLMs map these relationships.
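The roadmap arithmetic is easy to check. A minimal sketch, assuming a hypothetical Monday/Wednesday/Friday publishing pattern and an arbitrary Monday start date:

```python
from datetime import date, timedelta

def publishing_dates(start, days=360, weekdays=(0, 2, 4)):
    """Dates within `days` days of `start` that fall on the given weekdays (Mon=0)."""
    return [start + timedelta(days=d) for d in range(days)
            if (start + timedelta(days=d)).weekday() in weekdays]

schedule = publishing_dates(date(2026, 1, 5))  # 2026-01-05 is a Monday
print(len(schedule))  # 155
```

The exact count depends on which weekday the year starts on (51 full weeks give 153 slots, plus whatever the remaining 3 days add), but any three-per-week pattern clears the 150-post mark.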

More content isn't better if it's repetitive, though. A 10-article cluster on "Email Marketing" fails if every post covers "How to write a subject line" without adding unique data points. Effective Pillar-Cluster 2.0 models map the "unknown unknowns" of a topic: the granular, long-tail questions that users often find in People Also Ask (PAA) boxes. Articfly’s Content Calendar automates this by analyzing SERP gaps and generating a year-long editorial sequence that avoids overlapping intents.

Executing a 360-day roadmap manually often leads to inconsistent publishing schedules or missed opportunities for internal linking. Teams that manage 50+ workflows per month find that centralizing the ideation process within a SaaS dashboard maintains the necessary velocity. Instead of guessing which subtopic to cover next, the system identifies the next logical node in the cluster based on current search trends and existing site coverage. The logic prevents internal cannibalization where two articles target the same search intent, ensuring every new post adds a unique layer to the WordPress site's knowledge base.
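A basic cannibalization check can be sketched with set overlap. This assumes each planned or published post is tagged with the set of queries it targets (the slugs and queries below are invented). Pairs whose query sets overlap heavily, measured with Jaccard similarity and an arbitrary 0.5 cutoff, are flagged for merging or re-scoping.

```python
from itertools import combinations

def jaccard(a, b):
    """Overlap between two sets: size of intersection divided by size of union."""
    return len(a & b) / len(a | b) if a | b else 0.0

def cannibalization_pairs(posts, threshold=0.5):
    """Return (post_a, post_b, score) for pairs targeting largely the same intent."""
    return [
        (a, b, round(jaccard(posts[a], posts[b]), 2))
        for a, b in combinations(sorted(posts), 2)
        if jaccard(posts[a], posts[b]) >= threshold
    ]

posts = {
    "subject-lines-101": {"email subject lines", "subject line tips", "open rate"},
    "better-subject-lines": {"email subject lines", "subject line tips", "preheader text"},
    "email-deliverability": {"spf dkim setup", "inbox placement"},
}
print(cannibalization_pairs(posts))
```

The two subject-line posts share half of their combined queries and get flagged; the deliverability post covers a distinct intent and does not.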

Frequently Asked Questions about AEO

Can AI-generated content rank in Google AI Overviews?

AI content can rank in AI Overviews if it provides high information gain and is properly optimized with structured data. Google's documentation states that Search rewards helpful, people-first content regardless of whether a human or a machine produced it. Technical guides featuring specific configuration values (such as a 30-second timeout for an API call) provide more utility than a generic summary, and Google’s systems are increasingly sensitive to "information gain," which measures how much new data a page adds compared to existing search results. Success depends on data depth.

Will AI Overviews significantly reduce blog traffic?

Impact varies by niche, but informational "quick-answer" queries typically face the steepest declines in click-through rate. Data from industry tracking tools like ZipTie.dev indicates that complex queries requiring multi-step instructions still drive significant traffic to the source articles cited in the overview. Blogs that function as primary data sources or offer unique perspectives tend to maintain visibility, while simple sites built on quick answers are the most exposed. High-intent traffic remains stable because users need the context of a 2,000-word deep dive.

How do I track AI Overview performance in Google Search Console?

Google does not currently provide a standalone "AI Overview" metric in the performance report. Teams running high-volume sites monitor the Search Appearance tab for impressions tied to specific rich snippets and featured fragments that often feed the AI model.

A sudden drop in CTR for a keyword that maintains its position usually indicates an AI Overview is satisfying the user's intent directly on the SERP. Tracking these shifts requires a weekly audit of the "Queries" report alongside a manual check of the top 10 results for high-value terms like "best WordPress SEO plugins."
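That check can be scripted against two Search Console exports (for example, the Queries report for consecutive periods). A minimal sketch, assuming each export has been loaded as a dictionary mapping query to (clicks, impressions, average position). The thresholds are hypothetical starting points, the data is invented, and a flag is consistent with an AI Overview but not proof of one, since seasonality or other SERP features can cause the same pattern.

```python
def ctr(clicks, impressions):
    """Click-through rate, or 0.0 when there were no impressions."""
    return clicks / impressions if impressions else 0.0

def likely_ai_overview_hits(before, after, max_position_shift=1.0, min_ctr_drop=0.30):
    """Queries whose average position held steady while CTR fell sharply.

    before/after: {query: (clicks, impressions, avg_position)}
    """
    flagged = []
    for query in before.keys() & after.keys():
        c0, i0, p0 = before[query]
        c1, i1, p1 = after[query]
        old, new = ctr(c0, i0), ctr(c1, i1)
        if old and abs(p1 - p0) <= max_position_shift and (old - new) / old >= min_ctr_drop:
            flagged.append(query)
    return sorted(flagged)

before = {"best wordpress seo plugins": (900, 10_000, 3.1),
          "wordpress backup plugin": (400, 8_000, 4.0)}
after = {"best wordpress seo plugins": (450, 10_500, 3.3),
         "wordpress backup plugin": (390, 8_100, 4.2)}
print(likely_ai_overview_hits(before, after))
```

Requiring a stable position is what separates this signal from an ordinary ranking loss; a query that also dropped several positions needs a different diagnosis.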

Action Plan: Future-Proof Your Blog Today

Auditing your top-performing content for Information Gain and implementing automated refreshing is the first step to AEO success. Search engines now prioritize unique perspectives that aren't found in a standard LLM training set. A solo blogger transitioning from keyword targeting to entity authority should start by reviewing the top 10 pages on their WordPress site. By analyzing these high-traffic posts, a writer can identify one data point, personal case study, or technical nuance that competitors lack. This audit confirms that the content delivers incremental value beyond the consensus. Once the audit is complete, installing the Articfly WordPress plugin allows direct synchronization between the AI content engine and the live site. The dashboard provides a roadmap for the next 90 to 360 days, focusing on content clusters rather than isolated keywords. This structured approach builds recognized topical authority.

A 90-day refresh cycle prevents content decay by forcing a re-evaluation of every published piece once per quarter.

The Articfly dashboard connects to a WordPress instance via the native plugin to enable one-click scheduling. Engineers running 50+ workflows often use the API, whereas solo bloggers upload their three best-performing posts to the Brand Voice Analyzer to lock in a specific tone (the Pro plan allows multiple voice profiles). No more guessing.

Setting the SEO tools panel to check for schema generation prevents errors before publication. Every post should include at least one JSON-LD block to help search engines parse the entity relationship. Individual contributors often begin with the Polar.sh subscription to access Pro plan features.
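A quick pre-publication check along those lines needs only the standard library: find every `<script type="application/ld+json">` block in a rendered page and confirm each one parses. A minimal sketch; `JsonLdExtractor` and `check_jsonld` are our own illustrative helpers, and they check JSON syntax only, not Schema.org validity.

```python
import json
from html.parser import HTMLParser

class JsonLdExtractor(HTMLParser):
    """Collect the text content of <script type="application/ld+json"> elements."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data  # script text may arrive in several chunks

def check_jsonld(html):
    """Return (number_of_blocks, list_of_error_messages)."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    errors = []
    for i, block in enumerate(parser.blocks):
        try:
            json.loads(block)
        except json.JSONDecodeError as err:
            errors.append(f"block {i}: {err.msg}")
    return len(parser.blocks), errors

page = """<html><head>
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "Article"}</script>
<script type="application/ld+json">{"@type": "FAQPage" "mainEntity": []}</script>
</head><body></body></html>"""
print(check_jsonld(page))
```

A result whose first value is 0 means the post has no JSON-LD at all and fails the at-least-one-block rule; any entry in the error list means a block search engines will likely ignore.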

Want the system behind this content?

Join the top 1% of SEOs generating programmatic, high-converting organic traffic completely on auto-pilot.
